This work improved object detection in remote sensing imagery, where targets are often small, dense, and difficult to localize consistently across large aerial scenes.

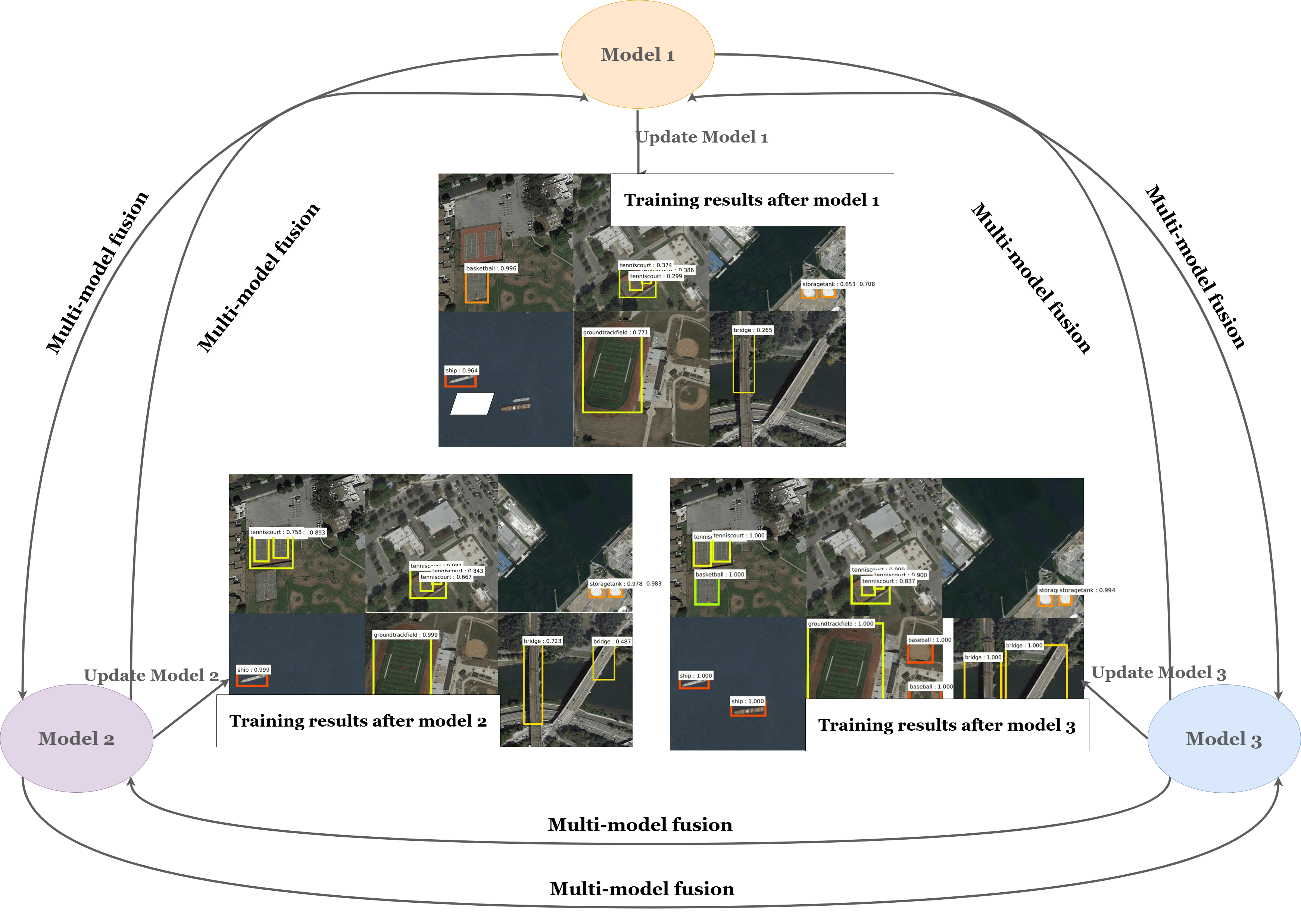

Objective: Improve remote sensing object detection through multi-model fusion, with a focus on more reliable localization and classification under challenging image conditions.

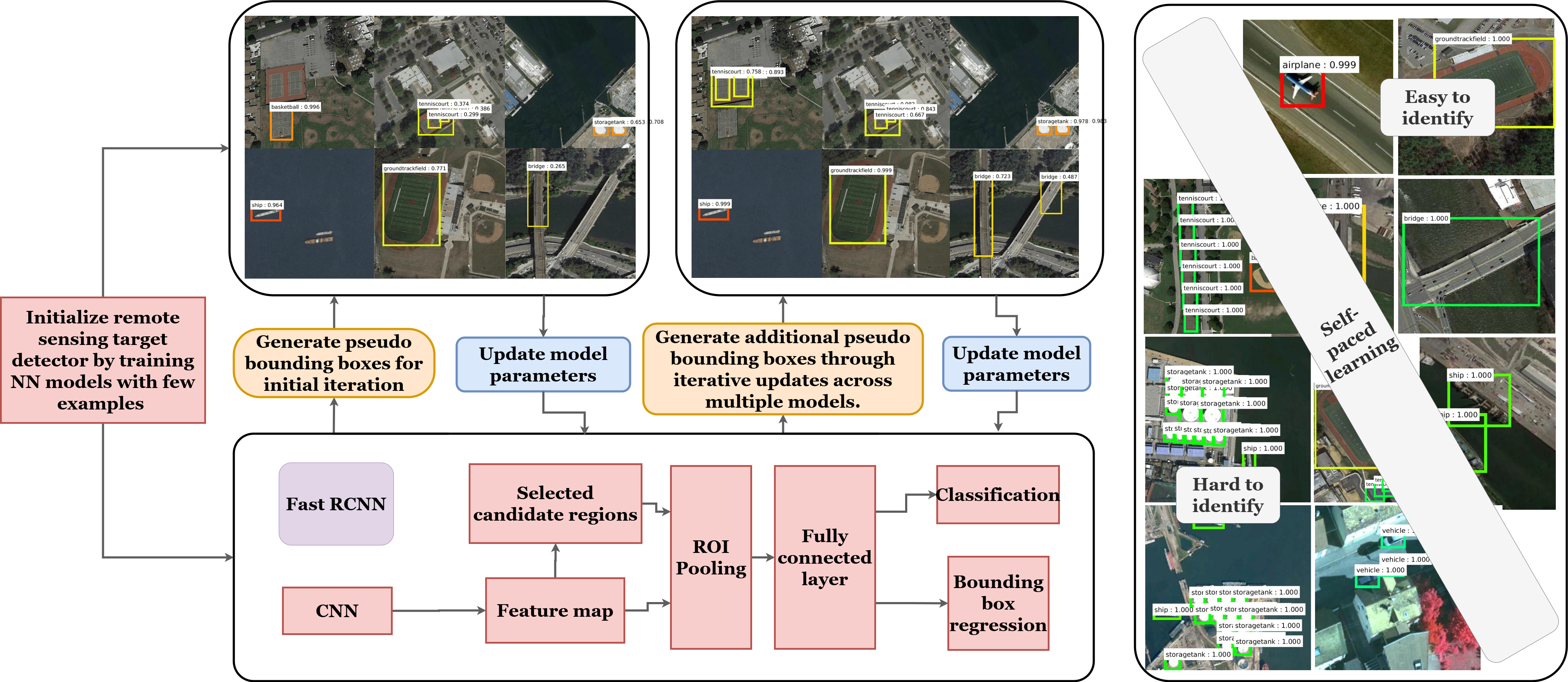

Multi-Model Fusion: The framework combines Fast R-CNN and R-FCN, leveraging the complementary strengths of the two detectors to improve accuracy. It relies on minimal bounding-box information for initialization and refinement, which supports more stable proposals and stronger final localization.
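
The summary does not say how the two detectors' outputs are merged, so the following is only a minimal sketch of one common late-fusion approach: pool the boxes from both models and apply greedy non-maximum suppression. The function names (`iou_one_to_many`, `fuse_detections`), the `[x1, y1, x2, y2]` box layout, and the `iou_thr` default are illustrative assumptions, not the project's actual implementation.

```python
import numpy as np


def iou_one_to_many(box, boxes):
    """IoU between one box and an array of boxes, all as [x1, y1, x2, y2]."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)


def fuse_detections(boxes_a, scores_a, boxes_b, scores_b, iou_thr=0.5):
    """Pool detections from two models, then keep the highest-scoring box
    in each group of overlapping boxes (greedy NMS).

    Assumption: both models score the same class on a comparable scale.
    """
    boxes = np.concatenate([boxes_a, boxes_b]).astype(float)
    scores = np.concatenate([scores_a, scores_b]).astype(float)
    order = np.argsort(-scores)  # indices sorted by descending score
    keep = []
    while order.size > 0:
        best = order[0]
        keep.append(best)
        rest = order[1:]
        if rest.size == 0:
            break
        overlaps = iou_one_to_many(boxes[best], boxes[rest])
        order = rest[overlaps < iou_thr]  # drop boxes overlapping the kept one
    return boxes[keep], scores[keep]


# Hypothetical detections for a single class in one image tile.
boxes_a = np.array([[0, 0, 10, 10]])
scores_a = np.array([0.9])
boxes_b = np.array([[1, 1, 11, 11], [50, 50, 60, 60]])
scores_b = np.array([0.8, 0.7])
fused_boxes, fused_scores = fuse_detections(boxes_a, scores_a, boxes_b, scores_b)
```

In this toy input the two overlapping boxes collapse to the higher-scoring one, while the box found by only one model is retained. Score averaging or weighted box merging would be equally plausible fusion rules.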

Self-Paced Learning: Training progressed from easier, higher-confidence samples to more ambiguous cases, improving stability and generalization.
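
The easy-to-hard progression can be illustrated with the commonly used hard-threshold form of self-paced learning, in which a sample takes part in a training round only if its current loss is below a pace parameter that grows over time. This is a sketch under assumptions: the exact weighting scheme used in this work is not stated, and `self_paced_weights`, `pace_schedule`, `lam0`, and `growth` are hypothetical names and parameters.

```python
import numpy as np


def self_paced_weights(losses, lam):
    """Return 1.0 for samples whose loss is below the pace threshold `lam`
    (treated as easy and included), and 0.0 for the rest (deferred)."""
    return (np.asarray(losses, dtype=float) < lam).astype(float)


def pace_schedule(lam0, growth, num_rounds):
    """Geometrically growing thresholds, so harder samples enter later."""
    return [lam0 * growth ** r for r in range(num_rounds)]


# Hypothetical per-sample losses from the current model.
losses = [0.1, 0.5, 2.0]
for lam in pace_schedule(lam0=0.3, growth=2.0, num_rounds=3):
    weights = self_paced_weights(losses, lam)
    # A real training loop would minimise sum(weights * per_sample_loss) here
    # and recompute the losses before the next round.
    print(lam, weights)
```

Raising the threshold gradually means early rounds are dominated by confident, low-loss samples, and ambiguous ones are admitted only once the model has stabilised.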

Impact: The pipeline improved detection robustness to scale variation, cluttered backgrounds, and sparse visual cues in geospatial vision tasks.